A detailed look at the engines, data structures, and security architecture that power the Digital Assets product line.

When financial institutions, fintechs, and enterprises begin working with blockchain, they quickly discover that the hard part is not writing a smart contract. The hard part is everything around the smart contract. How do you manage thousands of customer wallets securely? How do you track which on-chain token belongs to which internal account? How do you reliably react when a deposit arrives on-chain and update your off-chain records atomically? How do you ensure a single faulty transaction does not create an unreconcilable discrepancy in your books?

Kaleido Digital Assets is the layer that answers these questions. It sits above the smart contract and the chain, providing a structured, auditable, policy-enforced system for managing the complete lifecycle of digital assets from issuance through transfer, custody, reporting, and redemption.

The product is designed to support a wide range of asset categories:

- Financial Assets: securities, funds, ETFs, CBDCs, stablecoins, and related instruments

- Supply Chain Assets: tracking information, bills of lading, delivery verifications, provenance records

- ESG Assets: carbon credits, emissions tokens, verifiable sourcing certificates

- Collectible Assets: fine art, loyalty program points, in-game balances

What unifies all of these use cases is that they require the same underlying infrastructure: reliable event detection, structured data management, policy-governed transaction execution, and secure key custody. Digital Assets provides all of this through a set of composable, configurable building blocks rather than rigid, use-case-specific templates.

Key distinction

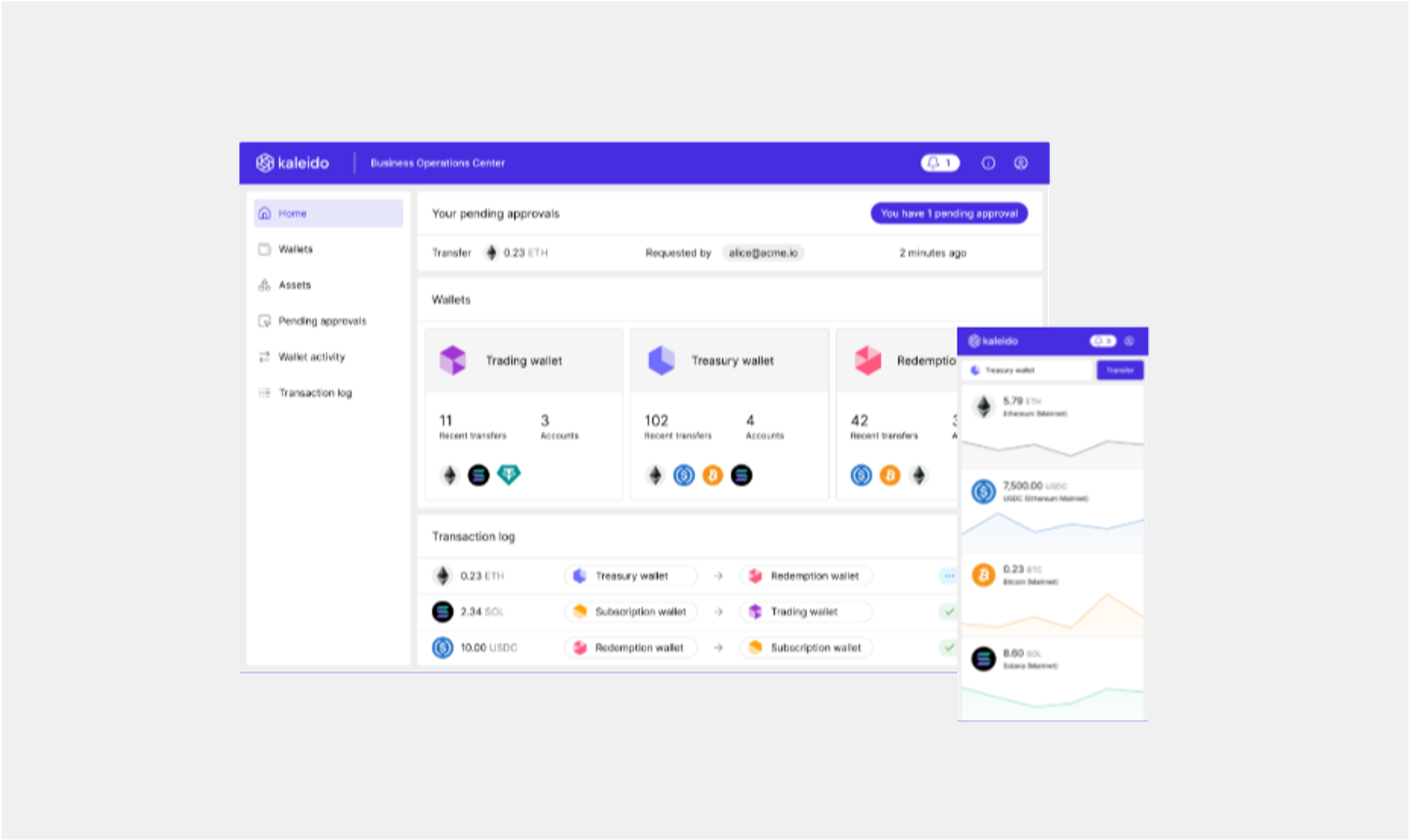

Digital Assets is not a product you configure once and hand off to users. It is a runtime platform, a continuously running set of engines that process events, execute workflows, and maintain a queryable state of your asset universe. Your enterprise applications talk to it via REST APIs; they do not interact with the blockchain directly.

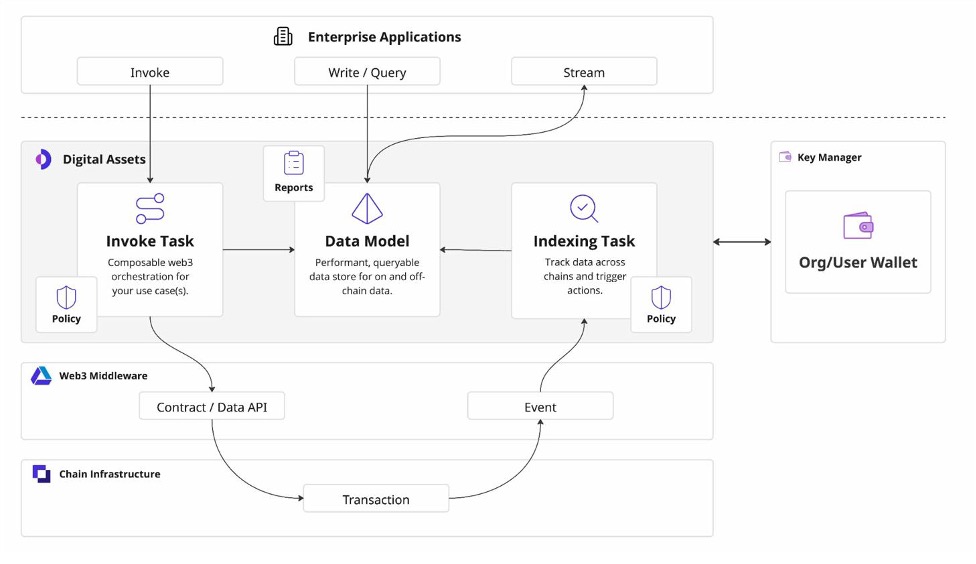

The diagram below shows how the Digital Assets product is positioned within the broader Kaleido platform stack. Enterprise applications sit above the Digital Assets layer and interact with it in three ways: they invoke operations (triggering workflows that write to the blockchain), they write and query the Data Model (reading and enriching asset state), and they stream events from the Indexing layer (reacting to on-chain activity in real time).

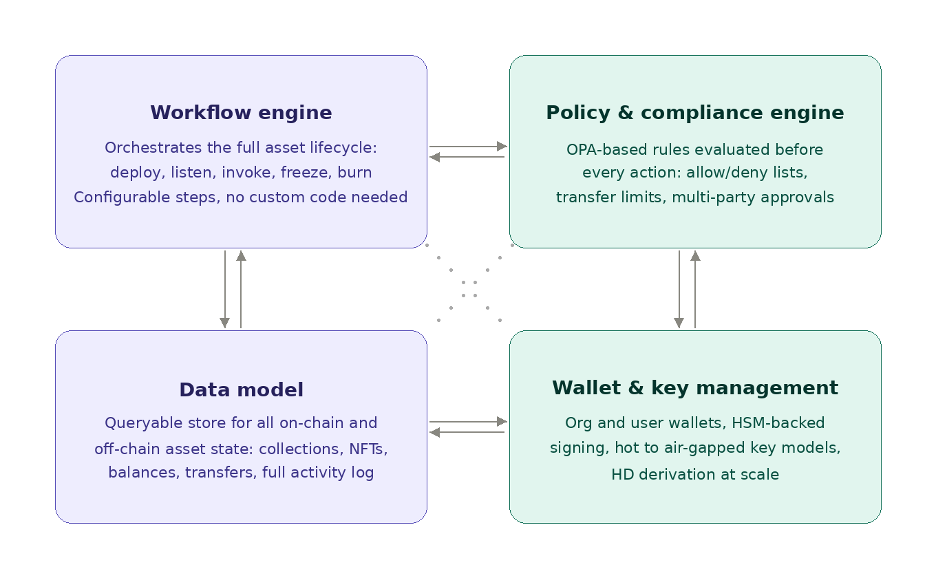

Four capabilities work together inside the Digital Assets boundary:

Figure 1. The four core capabilities of the Digital Assets product, working in concert.

These engines communicate with the chain through Kaleido's Web3 Middleware layer, which handles the complexities of JSON-RPC, transaction nonce management, gas estimation, and event subscription. This makes the Digital Assets product chain-agnostic: the same workflow logic that runs against an Ethereum mainnet deployment can be pointed at a permissioned Hyperledger Besu network, Polygon, or other supported networks without rewriting your workflows.

The Workflow Engine is the operational core of Digital Assets. It governs the complete lifecycle of a digital asset from the moment a contract is deployed: standing up the listeners and indexing configuration that track it on-chain, applying the policy framework that controls who can do what, managing allow and deny lists, and executing the operational actions (mint, transfer, burn, freeze, pause) that move an asset through its life. When your application needs to act on an asset, it is orchestrating against this engine, which ensures every action is asset-aware, policy-enforced, and fully audited.

The key design choice here is that invocation is not a single function call. It is a sequential pipeline of named steps, each performing a discrete action and passing data forward into a shared context. Think of it as a serverless function with explicit state management between steps.

Each step in a workflow can perform one of a range of actions: make an HTTP request to an external system, query the Data Model, apply a data transformation to reshape inputs, call a smart contract function via the Contract API, or evaluate a policy. Steps communicate through a shared context store, an in-memory key-value map that accumulates outputs as the pipeline runs. A later step can read the output of any earlier step.

Steps return one of three status codes: Continue (proceed to the next step), Error (halt with failure), or Return (halt with success and a specified result). This enables conditional branching and early exit: if a compliance check at step two returns Return with a rejection, the workflow stops there and returns that result without ever reaching the blockchain.
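The step model described above can be pictured with a small sketch. This is an illustration only, not Kaleido's implementation: the `Status`, `StepResult`, and `run_workflow` names, the shape of the context dictionary, and the three example steps are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable


class Status(Enum):
    CONTINUE = "continue"  # proceed to the next step
    ERROR = "error"        # halt with failure
    RETURN = "return"      # halt with success and a specified result


@dataclass
class StepResult:
    status: Status
    output: Any = None


# A step reads the shared context and returns a StepResult.
Step = Callable[[dict[str, Any]], StepResult]


def run_workflow(steps: list[tuple[str, Step]], inputs: dict[str, Any]) -> StepResult:
    """Run named steps in order, accumulating outputs in a shared context."""
    context: dict[str, Any] = {"input": inputs}
    for name, step in steps:
        result = step(context)
        context[name] = result.output  # later steps can read earlier outputs
        if result.status is not Status.CONTINUE:
            return result  # Error or Return: no later step runs
    return StepResult(Status.RETURN, context)


# Hypothetical steps, for illustration only.
def check_balance(ctx: dict[str, Any]) -> StepResult:
    return StepResult(Status.CONTINUE, {"balance": 500})


def check_compliance(ctx: dict[str, Any]) -> StepResult:
    if ctx["input"]["amount"] > ctx["check_balance"]["balance"]:
        return StepResult(Status.RETURN, {"rejected": "insufficient balance"})
    return StepResult(Status.CONTINUE, {"approved": True})


def submit_transfer(ctx: dict[str, Any]) -> StepResult:
    # Stand-in for a smart contract call; no chain is contacted here.
    return StepResult(Status.CONTINUE, {"submitted": ctx["input"]["amount"]})


STEPS = [
    ("check_balance", check_balance),
    ("check_compliance", check_compliance),
    ("submit_transfer", submit_transfer),
]
```

Calling `run_workflow(STEPS, {"amount": 900})` stops at the second step and returns the rejection, so the stand-in for the contract call never runs.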

Why this matters

In a traditional integration, the logic for "check balance, validate compliance status, call smart contract, update internal record" lives in application code, often spread across services requiring careful error handling and rollback logic. In Kaleido's invocation model, this sequence is a declarative configuration. The platform handles retry semantics, the blockchain submission, and the audit trail. Your application invokes the workflow and receives a result.

Each invocation workflow can have a Policy attached. Policies are evaluated by OPA (Open Policy Agent), an open-source, widely adopted policy engine, and are authored in Rego, OPA's policy language. Before a signing step executes, the Policy is evaluated against the full transaction context: who is initiating it, what parameters it contains, what time it is, and what the current state of the Data Model shows. A policy denial stops the transaction before it reaches the chain. This is how you enforce business rules such as "transfer amounts above $1M require dual approval" at the platform level, not in application code.
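To make the dual-approval example concrete, here is a minimal sketch of the decision logic in Python. In the product such a rule would be expressed as an OPA policy; the `TransferRequest` fields, the function names, and the choice not to count the initiator as an approver are assumptions made for illustration.

```python
from dataclasses import dataclass, field

DUAL_APPROVAL_THRESHOLD = 1_000_000  # USD, taken from the example rule in the text


@dataclass
class TransferRequest:
    initiator: str
    amount_usd: int
    approvers: set[str] = field(default_factory=set)


def evaluate_policy(req: TransferRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Hypothetical rule set for illustration."""
    if req.amount_usd <= DUAL_APPROVAL_THRESHOLD:
        return True, "below dual-approval threshold"
    # Assumption: an approval by the initiator does not count toward the two required.
    independent = req.approvers - {req.initiator}
    if len(independent) >= 2:
        return True, "dual approval satisfied"
    return False, "transfers above $1M require dual approval"


def sign_and_submit(req: TransferRequest) -> str:
    allowed, reason = evaluate_policy(req)
    if not allowed:
        # Denied before signing: nothing reaches the chain.
        return f"denied: {reason}"
    return "signed"  # stand-in for the real signing step
```

The point of the sketch is the ordering: the policy decision is made before the signing step, so a denial never produces a signed transaction.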

One of the most practically important components of Digital Assets, and the one most often underestimated, is the Data Model. The blockchain is excellent at providing a tamperproof ledger, but it is a poor database. Querying "all NFTs owned by address X across three contracts deployed on two chains" from raw blockchain data is slow, expensive, and complex.

The Digital Assets Data Model solves this. It is a high-performance, structured data store maintained by the Indexing Engine, queryable by enterprise applications via REST APIs, and designed to represent the full state of your asset universe in a format useful for applications rather than chain explorers.

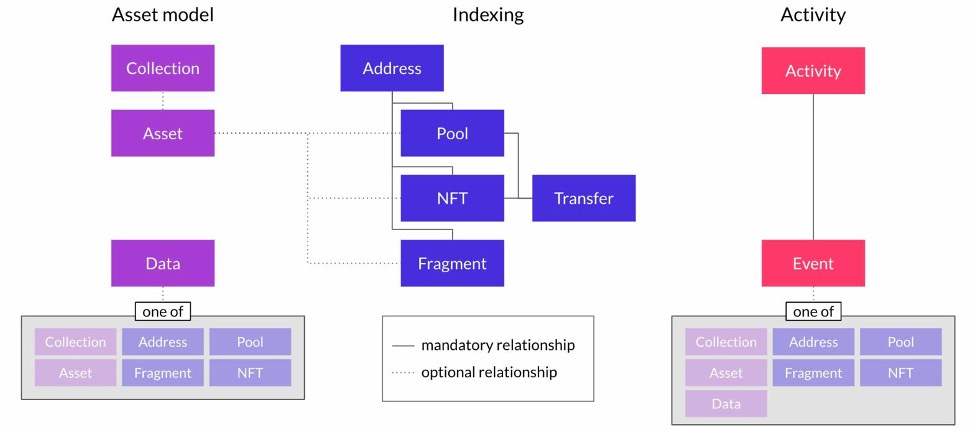

Figure 2. The three domains of the Digital Assets data model: Asset Model objects (purple), Indexing objects (blue), and Activity objects (pink/red).

The data model is organized into three conceptual domains:

Asset Model objects represent what you own and manage. A Collection is a grouping equivalent to a smart contract or a related set of contracts. An Asset is a specific fungible or non-fungible token within a Collection. A Data record is supplementary metadata that can be attached to any other object type: an off-chain JSON blob, a document reference, or any enrichment your use case requires.

This model is deliberately abstract. A Collection could be an ERC-20 contract, an ERC-1155 multi-token contract, or a custom contract for permissioned securities. The platform does not force a specific token taxonomy. It provides the primitives and lets you map them to your operational reality.

Indexing objects represent on-chain state tracked by the Indexing Engine. An Address is a wallet address. A Pool is a logical grouping of tokens, useful for fungible assets where ownership is tracked at the pool level. An NFT is a specific non-fungible token instance. A Fragment is a sub-unit of a fungible asset, used in sophisticated custody scenarios where a single on-chain balance is composed of multiple earmarked allocations (detailed further in the Omnibus Wallets section). A Transfer records the history of NFT movements.

Activity objects represent the audit trail. Every action flowing through the platform, whether from an invocation workflow or detected by the Indexing Engine, produces an Activity record associated with an Event. The Event references the specific entity it relates to: a Collection, Address, Pool, Asset, Fragment, or NFT. This gives you a complete, queryable history of everything that has happened across your entire asset portfolio.

The Data Model turns blockchain state, optimized for consensus rather than queries, into a first-class enterprise data asset that applications can use directly.

Every object in the data model supports custom metadata fields. This is where you store the off-chain enrichment that makes digital assets meaningful in a business context: the ISIN code on a tokenized bond, the supply chain origin data on a provenance token, the customer ID associated with a loyalty balance. The platform indexes these custom fields for query performance.
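The relationships between these object types can be sketched as plain data classes. The field names below are assumptions chosen for illustration and are not the platform's actual schema; only a few of the object types are shown.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Collection:
    id: str
    name: str
    metadata: dict[str, Any] = field(default_factory=dict)  # custom fields


@dataclass
class Asset:
    id: str
    collection_id: str
    fungible: bool
    metadata: dict[str, Any] = field(default_factory=dict)  # e.g. an ISIN code


@dataclass
class Event:
    id: str
    entity_type: str  # "collection", "address", "pool", "asset", "fragment", or "nft"
    entity_id: str


@dataclass
class Activity:
    id: str
    event: Event
    description: str


def history_for(activities: list[Activity], entity_type: str, entity_id: str) -> list[Activity]:
    """Return the activity records whose event references the given entity."""
    return [
        a for a in activities
        if a.event.entity_type == entity_type and a.event.entity_id == entity_id
    ]
```

Because every Activity points at an Event, and every Event points at one entity, a per-entity history is a simple filter over the activity records.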

Blockchain financial assets commonly use very large integers to represent small decimal values. ETH, for example, is denominated in wei (10⁻¹⁸ ETH). The Data Model allows you to define named units and their decimal factors on a per-asset basis, so your application layer can work in human-readable denominations (such as "0.5 ETH") while the platform handles the integer representation on-chain. This is configurable per asset, not just per blockchain.
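As a rough sketch of what per-asset unit handling involves, the conversion between a human-readable amount and the on-chain integer can be written with Python's `decimal` module. The `ETH_UNITS` table and the function names are assumptions for the example; the platform's own unit configuration format is not shown here.

```python
from decimal import Decimal, localcontext

# Hypothetical per-asset unit table: unit name -> number of decimal places
# relative to the smallest on-chain unit.
ETH_UNITS = {"wei": 0, "gwei": 9, "ETH": 18}


def to_base_units(amount: str, unit: str, units: dict[str, int]) -> int:
    """Convert a human-readable amount such as "0.5" ETH to an integer in base units."""
    with localcontext() as ctx:
        ctx.prec = 100  # enough digits for 256-bit integers
        scaled = Decimal(amount).scaleb(units[unit])
        if scaled != scaled.to_integral_value():
            raise ValueError(f"{amount} {unit} is not a whole number of base units")
        return int(scaled)


def from_base_units(value: int, unit: str, units: dict[str, int]) -> Decimal:
    """Convert an integer in base units back to the named unit."""
    with localcontext() as ctx:
        ctx.prec = 100
        return Decimal(value).scaleb(-units[unit])
```

Amounts are passed as strings rather than floats so that values such as "0.1" are represented exactly before scaling.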

While the Invocation Engine pushes actions onto the chain, the Indexing Engine is the pull side: it continuously monitors the blockchain for events, decodes them, and acts on them. This is the mechanism that keeps the Data Model synchronized with on-chain reality.

Figure 3. The submission and indexing flow: operations submitted through the Invocation Engine are tracked through to confirmation, with the Indexing Engine picking up the resulting events to update the Data Model.

The Indexing Engine subscribes to the event stream provided by the Web3 Middleware layer, which handles the low-level mechanics of log filtering, block polling, and chain reorganization detection. From the Indexing Engine's perspective, events arrive as a reliable, ordered stream.

One of the most underappreciated challenges in building on blockchain is handling finality correctly. On a probabilistic finality chain such as Ethereum mainnet, a transaction that appears confirmed can be reorganized out of the canonical chain. An enterprise system that credits a customer's account immediately upon seeing one confirmation could be defrauded by a reorg.

The Indexing Engine lets you configure finality rules per chain: how many block confirmations are required before an event is treated as final, and what policy should apply to events that arrive before that threshold. You can maintain a "pending" state in the Data Model, trigger a different action for a pre-final event, and then update the state when full finality is reached, all configured declaratively.
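A confirmation-depth rule of this kind can be sketched in a few lines. The chain names and thresholds below are illustrative assumptions, not recommended values, and the function is a simplification of whatever the platform does internally.

```python
from dataclasses import dataclass

# Hypothetical per-chain rules: confirmations required before an event is final.
REQUIRED_CONFIRMATIONS = {"ethereum-mainnet": 12, "besu-private": 1}


@dataclass
class ObservedEvent:
    chain: str
    block_number: int


def finality_state(event: ObservedEvent, chain_head: int) -> str:
    """Classify an event as "pending" or "final" from its confirmation depth."""
    # The block containing the event counts as the first confirmation.
    confirmations = chain_head - event.block_number + 1
    if confirmations >= REQUIRED_CONFIRMATIONS[event.chain]:
        return "final"
    return "pending"
```

An application could record the deposit as pending on first sight and only credit the customer once `finality_state` reports `"final"` for the current chain head.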

Just as invocation workflows support policy evaluation, the Indexing Engine also supports policy checks on inbound events. When a deposit into a monitored address is detected, the engine can run a policy evaluation before updating the Data Model or triggering a downstream action. This is where you integrate KYT (Know Your Transaction) checks, AML screening, or custom business validation before accepting that a deposit is legitimate.

In a consumer context, a wallet is an app. In an enterprise context, a wallet is a security perimeter: it defines who controls which private keys, under what conditions, with what audit trail. Kaleido's approach to wallets is a first-class product concern, not an afterthought.

The platform supports two wallet scopes. An Org Wallet is a set of keys controlled by the organization, used for operational functions such as minting, burning, contract administration, and gas payment. A User Wallet is associated with a specific end-user identity within the platform, enabling per-user key management at scale.

For deployments that need to provision thousands of wallets (one per customer, one per counterparty), the Key Manager supports hierarchical key derivation (HD Wallets per BIP32/BIP39), meaning a tree of keys can be derived from a single root seed. This enables factory-scale key provisioning without a proportional increase in HSM operations.
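To show why a single root seed is enough, here is a minimal sketch of BIP32 hardened child key derivation using only the Python standard library. It assumes the secp256k1 curve used by Bitcoin and Ethereum, covers only private-key hardened derivation, and says nothing about how the Key Manager itself implements derivation or where the seed is stored.

```python
import hashlib
import hmac

# Order of the secp256k1 curve group (assumption: secp256k1 keys).
SECP256K1_ORDER = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
HARDENED_OFFSET = 0x80000000


def master_key(seed: bytes) -> tuple[int, bytes]:
    """Derive the BIP32 master private key and chain code from a seed."""
    digest = hmac.new(b"Bitcoin seed", seed, hashlib.sha512).digest()
    key = int.from_bytes(digest[:32], "big")
    if key == 0 or key >= SECP256K1_ORDER:
        raise ValueError("seed produces an invalid master key")
    return key, digest[32:]


def derive_hardened_child(parent_key: int, chain_code: bytes, index: int) -> tuple[int, bytes]:
    """Derive hardened child number `index` (0-based) from a parent private key."""
    if not 0 <= index < HARDENED_OFFSET:
        raise ValueError("index out of range")
    data = (
        b"\x00"
        + parent_key.to_bytes(32, "big")
        + (index + HARDENED_OFFSET).to_bytes(4, "big")
    )
    digest = hmac.new(chain_code, data, hashlib.sha512).digest()
    tweak = int.from_bytes(digest[:32], "big")
    child_key = (tweak + parent_key) % SECP256K1_ORDER
    if tweak >= SECP256K1_ORDER or child_key == 0:
        raise ValueError("invalid child; BIP32 says to use the next index")
    return child_key, digest[32:]
```

Each child is fully determined by the parent key, the chain code, and the index, so any number of wallets can be re-derived later from the root seed alone.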

All private key material is managed through Kaleido's Key Manager, a cross-platform component that abstracts over the underlying key storage technology. The Key Manager supports a full spectrum of security models:

Natively supported HSM vendors include Thales Luna, IBM OSO, Fortanix, AWS CloudHSM, GCP Cloud HSM, Azure Key Vault, and HashiCorp Vault. For any PKCS#11-compliant HSM not on this list, the platform's Remote Signing Module (RSM) provides a bridge: a lightweight component deployed co-located with your HSM that validates signing payloads and authorizes the hardware to sign, without Kaleido ever having access to private key material.

Security architecture principle

Kaleido does not generate or hold private keys on behalf of customers under any deployment model. Keys are generated within the customer's chosen secure environment. In HSM-backed configurations, generation occurs within the hardware security boundary. This is a foundational design principle, not a configuration option.

Many institutional digital asset operations use an omnibus wallet structure: a single on-chain address, or a small number of addresses, holding assets on behalf of many customers, with per-customer allocations tracked off-chain in a ledger. This is the standard model for custodians, exchanges, and asset managers. It is operationally efficient (fewer on-chain addresses mean lower gas costs and simpler key management), but it introduces reconciliation complexity. How do you match inbound deposits to the right customer? How do you ensure the off-chain ledger stays in sync with on-chain reality?

Digital Assets has built-in support for this pattern through two mechanisms: Omnibus Wallets with Virtual Accounts, and Sweeping workflows.

An Omnibus Wallet in Kaleido is structured around a Virtual Account hierarchy. At the top level, a Virtual Account represents the aggregate holding: total assets under management. Below it, each customer has their own Virtual Account tracking their individual credit and debit history, producing a net balance.

To receive deposits, each Virtual Account can have one or more Deposit Accounts, the inbound identifiers used by customers to send funds. Deposit Accounts are flexible constructs. They can represent an on-chain wallet address (for UTXO-based or account-based chains), a destination tag or memo (for chains such as Stellar that route deposits through memo fields), or any other logical identifier that connects an inbound transfer to the correct customer record.
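The Virtual Account and Deposit Account relationship can be sketched as a small in-memory ledger. The class and method names are assumptions for illustration; the real objects live in the platform and are accessed through its APIs.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualAccount:
    name: str
    entries: list[int] = field(default_factory=list)  # credits > 0, debits < 0

    @property
    def balance(self) -> int:
        """Net balance: the sum of all credits and debits."""
        return sum(self.entries)


class OmnibusLedger:
    """Top-level aggregate over per-customer Virtual Accounts."""

    def __init__(self) -> None:
        self.accounts: dict[str, VirtualAccount] = {}
        # Deposit identifier (address, memo, tag, ...) -> customer name.
        self.deposit_accounts: dict[str, str] = {}

    def open_account(self, customer: str, deposit_ids: list[str]) -> None:
        self.accounts[customer] = VirtualAccount(customer)
        for deposit_id in deposit_ids:
            self.deposit_accounts[deposit_id] = customer

    def credit_deposit(self, deposit_id: str, amount: int) -> str:
        """Route an inbound deposit to the owning customer and credit it."""
        customer = self.deposit_accounts[deposit_id]
        self.accounts[customer].entries.append(amount)
        return customer

    def debit(self, customer: str, amount: int) -> None:
        if amount > self.accounts[customer].balance:
            raise ValueError("insufficient balance")
        self.accounts[customer].entries.append(-amount)

    @property
    def total(self) -> int:
        """Aggregate holding across all customers."""
        return sum(account.balance for account in self.accounts.values())
```

The deposit identifier is deliberately just a string here, which mirrors the point above that it may be an address, a memo, or any other routing key.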

When a deposit arrives at a customer's Deposit Account address, it needs to be swept: consolidated into the omnibus holding wallet. This is not a simple transfer. It requires detection, verification, policy checks, and an on-chain consolidation transaction, all coordinated reliably. Kaleido's sweeping workflow automates this:

The Fragment construct is particularly important here. It is the mechanism that tracks sub-units of an on-chain balance independently. When ten customers each send ETH to different deposit addresses before the sweep runs, each inbound amount is a separate Fragment, tracked separately through the confirmation and sweep pipeline. No customer's deposit is confused with another's, and the audit trail is complete and unambiguous.
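The idea of tracking each inbound amount separately until it is swept can be illustrated as follows. The three states and the `sweep` function are hypothetical simplifications; the actual sweeping workflow also involves policy checks and an on-chain consolidation transaction that are not modeled here.

```python
from dataclasses import dataclass
from enum import Enum


class FragmentState(Enum):
    DETECTED = "detected"    # seen on-chain, not yet final
    CONFIRMED = "confirmed"  # finality threshold reached
    SWEPT = "swept"          # consolidated into the omnibus wallet


@dataclass
class Fragment:
    deposit_address: str
    customer: str
    amount: int
    state: FragmentState = FragmentState.DETECTED


def sweep(fragments: list[Fragment]) -> int:
    """Mark confirmed fragments as swept and return the consolidated amount."""
    total = 0
    for fragment in fragments:
        if fragment.state is FragmentState.CONFIRMED:
            fragment.state = FragmentState.SWEPT
            total += fragment.amount
    return total
```

Fragments that are still only detected are left untouched by the sweep, so each customer's deposit keeps its own state and history.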

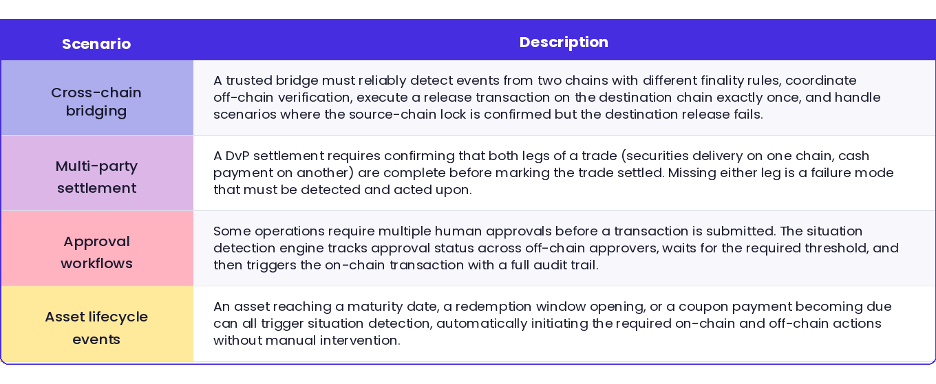

Standard indexing ("when event X fires, do Y") is sufficient for simple use cases. But many enterprise digital asset scenarios require coordinating multiple on-chain and off-chain events across multiple parties, with conditional logic, error handling, and exactly-once execution semantics.

Kaleido calls this Situation Detection, and it is provided by the orchestration engine built into the Asset Management Service. A "situation" is a complex state requiring multiple observable events, and possibly multiple actions, to resolve correctly.

The situation detection engine provides exactly-once execution semantics for off-chain actions, meaning that even in the face of system restarts, network interruptions, or transaction failures, the platform ensures that a triggered action is not duplicated. This is a deceptively hard problem in distributed systems, and the kind of infrastructure that most teams discover they need only after building their first production system without it.
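One common way to approximate exactly-once behavior for off-chain actions is to deduplicate on an idempotency key. The sketch below shows that general technique only; it is not a description of how the situation detection engine is built, and the `ActionLog` class is a hypothetical name.

```python
from typing import Any, Callable


class ActionLog:
    """Records action results by idempotency key.

    In-memory for illustration; a real system would need durable storage.
    """

    def __init__(self) -> None:
        self._results: dict[str, Any] = {}

    def run_once(self, key: str, action: Callable[[], Any]) -> Any:
        if key in self._results:
            # Already executed: return the stored result instead of re-running.
            return self._results[key]
        result = action()
        self._results[key] = result
        return result
```

Note the gap this sketch leaves open: a crash after `action()` runs but before the result is recorded would cause a retry to run the action again. Closing that gap requires either recording the result atomically with the action or making the downstream action itself idempotent, which is part of why the problem is harder than it looks.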

Digital Assets supports a broad set of token standards out of the box. The platform is also smart contract agnostic: any ABI-compatible contract can be uploaded to generate a typed REST API, meaning your invocation and indexing workflows can be built around any on-chain logic you deploy.
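To give a feel for how a contract ABI carries enough information to generate a typed API, the sketch below walks a standard Solidity ABI JSON fragment and lists one endpoint per function. The HTTP methods and path layout are invented for the example and do not describe the routes Kaleido actually generates.

```python
import json

# Fragment of an ERC-20 ABI in the standard Solidity JSON format.
ERC20_ABI_FRAGMENT = json.loads("""
[
  {"type": "function", "name": "transfer", "stateMutability": "nonpayable",
   "inputs": [{"name": "to", "type": "address"},
              {"name": "amount", "type": "uint256"}],
   "outputs": [{"name": "", "type": "bool"}]},
  {"type": "function", "name": "balanceOf", "stateMutability": "view",
   "inputs": [{"name": "account", "type": "address"}],
   "outputs": [{"name": "", "type": "uint256"}]},
  {"type": "event", "name": "Transfer", "anonymous": false,
   "inputs": [{"name": "from", "type": "address", "indexed": true},
              {"name": "to", "type": "address", "indexed": true},
              {"name": "value", "type": "uint256", "indexed": false}]}
]
""")


def describe_endpoints(abi: list[dict]) -> list[dict]:
    """Map each ABI function to a hypothetical REST endpoint description."""
    endpoints = []
    for entry in abi:
        if entry.get("type") != "function":
            continue  # events and other entries are not callable
        read_only = entry.get("stateMutability") in ("view", "pure")
        endpoints.append({
            "method": "GET" if read_only else "POST",  # assumed convention
            "path": f"/{entry['name']}",               # assumed path layout
            "params": {p["name"]: p["type"] for p in entry["inputs"]},
        })
    return endpoints
```

The parameter names and Solidity types come straight from the ABI, which is what makes a typed request schema possible without hand-written bindings.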

On supported networks, including Ethereum mainnet, Polygon, Arbitrum, Base, Avalanche, Hyperledger Besu, Stellar, Solana, Bitcoin, and Canton, these standards are available for direct deployment and management. Additional EVM-compatible networks can be onboarded through a governed process.

Putting these components together, a few representative architectures emerge. These are not the only possibilities, but they illustrate the range of what is in production today.

A regulated securities issuer deploys ERC-1400 or ERC-3643/T-REX contracts on a permissioned Besu network. Invocation workflows handle issuance, transfer, and redemption with policy enforcement that checks investor accreditation status before any transfer is approved. The Data Model maintains a real-time cap table. The Indexing Engine produces an Activity log that satisfies regulatory reporting requirements. The Key Manager with HSM backing ensures that the issuer's operational keys meet institutional custody standards.

A central bank or stablecoin operator manages an omnibus wallet structure across multiple reserve accounts. Deposit Accounts are provisioned per participating bank, with sweeping workflows consolidating inbound reserves. Virtual Accounts track each bank's allocation. Situation detection handles the multi-party reserve verification flow before new tokens are minted. All operations are audited end-to-end in the Activity log.

A commodity trader or logistics operator issues NFTs representing physical assets such as bills of lading, delivery events, and certification documents. Each stage of the supply chain (shipping, customs, warehouse receipt, final delivery) is an invocation workflow that updates the NFT state on-chain and enriches the Data Model with off-chain documentation. The Indexing Engine provides the counterparty-facing event stream so external parties can verify provenance without direct chain access.

A carbon registry or ESG program manager issues and retires carbon credits as ERC-20 tokens. The Indexing Engine detects retirement events and updates the Data Model to reflect cancelled credits. The Activity log provides the audit trail required by registry standards. Custom metadata fields on the Data Model store the project reference, vintage, and certification body for each credit, making the registry queryable by those dimensions.

If you are evaluating Kaleido Digital Assets for a specific use case, the most useful starting point is to map your requirements onto the building blocks described here: which on-chain events do you need to detect, what invocation workflows do you need to configure, and what does your Data Model need to look like? These questions have concrete answers, and the Kaleido team is well-positioned to help you work through them.

The invocation configuration interface, Data Model explorer, and Wallet management tools are all accessible from the Digital Assets section of the platform console. Documentation for each component is linked from within the product.
